Artificial intelligence is evolving beyond simple pattern recognition, now embracing self-reflection to enhance learning capabilities and decision-making processes in unprecedented ways.

🤖 The Dawn of Self-Aware Artificial Intelligence

The concept of machines learning from their own experiences represents one of the most fascinating developments in modern artificial intelligence. Self-reflection in AI systems refers to the ability of algorithms to analyze their own performance, identify weaknesses, and make adjustments without constant human intervention. This paradigm shift is transforming how we approach machine learning, moving from static models to dynamic systems that continuously evolve.

Traditional AI systems operated on fixed parameters set by human engineers. Once deployed, these systems would perform their designated tasks with minimal adaptation. However, the introduction of self-evaluative mechanisms has created a new generation of intelligent systems capable of introspection, allowing them to question their own outputs and refine their approaches based on internal feedback loops.

This breakthrough has profound implications across industries, from healthcare diagnostics that improve with each patient interaction to financial systems that adapt to market changes in real time. The power of AI self-reflection lies not just in automation, but in the creation of systems that genuinely learn and improve through experience.

Understanding the Mechanics of Machine Self-Evaluation

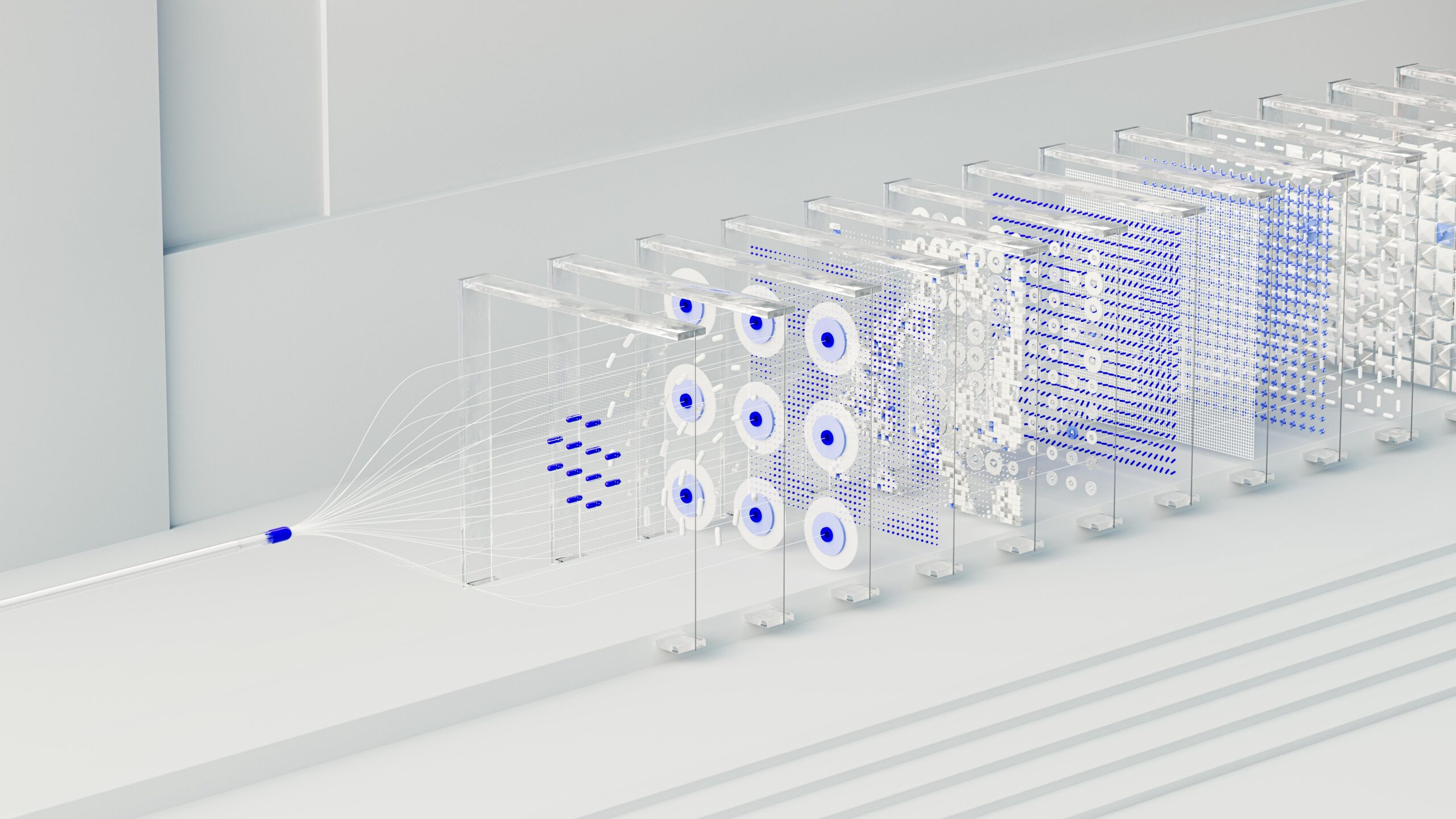

At its core, AI self-reflection operates through sophisticated feedback mechanisms that mirror aspects of human metacognition. These systems employ multiple layers of analysis, where one neural network component evaluates the outputs of another, creating an internal dialogue that drives improvement.

The process typically involves several key components working in harmony:

- Performance monitoring modules that track accuracy and efficiency metrics

- Error detection systems that identify discrepancies between expected and actual outcomes

- Confidence scoring mechanisms that assess the reliability of predictions

- Adjustment algorithms that modify parameters based on self-assessment results

- Memory systems that store successful strategies for future reference
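To make these five components concrete, here is a minimal Python sketch of a single-threshold classifier that monitors itself. Everything in it is hypothetical and deliberately tiny: `SelfEvaluatingClassifier`, its parameters, and the rule "nudge the threshold after each mistake" are illustrative assumptions, not a description of any production system. Each commented line is labeled with the component it stands in for.

```python
import random
from collections import deque


class SelfEvaluatingClassifier:
    """Hypothetical toy: a threshold classifier that monitors and corrects itself."""

    def __init__(self, threshold: float = 0.5, step: float = 0.05, window: int = 20):
        self.threshold = threshold
        self.step = step
        self.recent = deque(maxlen=window)  # performance monitoring: last `window` hits/misses
        self.best = (0.0, threshold)        # memory: (best windowed accuracy, threshold at that time)

    def predict(self, score: float) -> tuple[int, float]:
        label = int(score >= self.threshold)
        # confidence scoring: inputs far from the decision boundary get higher confidence
        confidence = min(1.0, 2 * abs(score - self.threshold))
        return label, confidence

    def observe(self, score: float, actual: int) -> float:
        label, _ = self.predict(score)
        error = label - actual  # error detection: +1 false positive, -1 false negative
        self.recent.append(error == 0)
        if error:
            # adjustment: raise the threshold after a false positive, lower it after a false negative
            self.threshold += self.step * error
        accuracy = sum(self.recent) / len(self.recent)
        if len(self.recent) == self.recent.maxlen and accuracy > self.best[0]:
            self.best = (accuracy, self.threshold)  # memory: remember the best-performing setting
        return accuracy


# Usage: the true rule, unknown to the model, is "positive when score >= 0.7".
rng = random.Random(0)
clf = SelfEvaluatingClassifier()
for _ in range(500):
    s = rng.random()
    clf.observe(s, int(s >= 0.7))
print(round(clf.threshold, 2), clf.best)
```

Starting from 0.5, the threshold drifts toward 0.7 and then oscillates within one step of it; a real system would shrink `step` over time or use gradient-based updates instead.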

Meta-learning, often called “learning to learn,” forms the foundation of AI self-reflection. This approach enables machines to develop strategies for tackling new problems by reflecting on how they solved previous challenges. Rather than starting from scratch with each task, self-reflective AI builds upon accumulated knowledge, creating increasingly sophisticated problem-solving frameworks.
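One published meta-learning algorithm, Reptile, learns a shared starting point from which new tasks can be solved in a few steps. The sketch below applies a Reptile-style update to a deliberately trivial family of tasks: fitting y = slope * x, where each task's slope is drawn around 2.0. The task family, step counts, and learning rates are assumptions chosen for illustration, not how any particular self-reflective system is built.

```python
import random

XS = [i / 10 for i in range(1, 11)]  # shared inputs 0.1 .. 1.0


def loss(w: float, slope: float) -> float:
    """Mean squared error of y_hat = w*x against y = slope*x."""
    return sum(((w - slope) * x) ** 2 for x in XS) / len(XS)


def grad(w: float, slope: float) -> float:
    """Derivative of `loss` with respect to w."""
    return sum(2 * (w - slope) * x * x for x in XS) / len(XS)


def adapt(w: float, slope: float, steps: int = 3, lr: float = 0.1) -> float:
    """Inner loop: a few plain gradient steps on one task."""
    for _ in range(steps):
        w -= lr * grad(w, slope)
    return w


def reptile(num_tasks: int = 200, meta_lr: float = 0.1, seed: int = 0) -> float:
    """Outer loop: move the shared initialization toward each task's adapted weight."""
    rng = random.Random(seed)
    w_meta = 0.0
    for _ in range(num_tasks):
        slope = rng.gauss(2.0, 0.3)  # sample a new but related task
        w_task = adapt(w_meta, slope)
        w_meta += meta_lr * (w_task - w_meta)
    return w_meta


w0 = reptile()
new_slope = 2.1
# Compare three-step adaptation from the learned start vs. from zero on an unseen task.
print(loss(adapt(w0, new_slope), new_slope), loss(adapt(0.0, new_slope), new_slope))
```

After meta-training, `w0` sits near the center of the task distribution, so three gradient steps from it reach a far lower loss on an unseen task than three steps from zero: "learning to learn" in miniature.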

The Role of Reinforcement Learning in Self-Improvement

Reinforcement learning serves as a crucial enabler of AI self-reflection. In this framework, algorithms receive rewards or penalties based on their actions, creating a feedback loop that guides future behavior. The system essentially reflects on which actions produced positive outcomes and which led to failures, adjusting its strategy accordingly.

Advanced reinforcement learning systems now incorporate self-critique mechanisms where the AI doesn’t just respond to external rewards but generates internal evaluations of its performance. This intrinsic motivation drives exploration and learning even in the absence of immediate external feedback, mirroring human curiosity and self-directed improvement.
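A common way to combine external reward with intrinsic motivation is a count-based novelty bonus: the agent values each action by its average external reward plus a bonus that shrinks the more often it has tried that action. The multi-armed bandit below is a minimal sketch of that idea under assumed settings (three arms, win/lose rewards, a bonus of `beta / sqrt(n)`); the arm probabilities and `beta` are illustrative values, not taken from any real system.

```python
import math
import random


def run_bandit(true_means: list[float], steps: int = 2000, beta: float = 1.0, seed: int = 0):
    """Greedy choice over (estimated reward + novelty bonus); returns pull counts and estimates."""
    rng = random.Random(seed)
    k = len(true_means)
    counts = [0] * k
    values = [0.0] * k  # running average of external reward per arm
    for _ in range(steps):
        scores = [
            # untried arms score infinity, so each is sampled at least once
            float("inf") if counts[a] == 0 else values[a] + beta / math.sqrt(counts[a])
            for a in range(k)
        ]
        arm = scores.index(max(scores))
        reward = 1.0 if rng.random() < true_means[arm] else 0.0  # external feedback
        counts[arm] += 1
        values[arm] += (reward - values[arm]) / counts[arm]  # incremental mean update
    return counts, values


counts, values = run_bandit([0.2, 0.5, 0.8])
print(counts, [round(v, 2) for v in values])
```

The bonus keeps the agent exploring arms it knows little about, while the reward estimates gradually pull it toward the arm that actually pays best. This is close in spirit to UCB-style exploration, simplified by omitting the logarithmic term.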

🎯 Transformative Applications Across Industries

Self-reflective AI is enabling applications that are difficult to achieve with static, train-once models. Its main advantage is adaptability: a system that monitors its own errors can keep improving after deployment rather than waiting for engineers to retrain it.

Healthcare and Medical Diagnostics

In medical imaging, self-reflective AI systems analyze diagnostic accuracy across thousands of cases, identifying where they perform well and where they need improvement. These systems can flag cases where their confidence is low, prompting human review and creating opportunities to learn from expert feedback. Over time, the AI develops a more nuanced understanding of edge cases and rare conditions that may appear only occasionally in training data.
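The flag-and-learn cycle described above can be sketched as a triage step followed by a feedback buffer. The function names, the `(case_id, label, confidence)` tuple layout, and the 0.8 threshold are hypothetical; in practice the threshold would be chosen on validation data, and the buffer would feed a retraining pipeline.

```python
def triage(predictions, threshold=0.8):
    """Split (case_id, label, confidence) tuples into auto-accepted and flagged-for-review lists."""
    accepted, flagged = [], []
    for case_id, label, confidence in predictions:
        target = accepted if confidence >= threshold else flagged
        target.append((case_id, label))
    return accepted, flagged


def record_expert_feedback(flagged, expert_labels, buffer):
    """Store each reviewed case with the expert's label and whether the model agreed."""
    for case_id, model_label in flagged:
        true_label = expert_labels[case_id]
        buffer.append((case_id, true_label, model_label == true_label))
    return buffer


preds = [("a", "benign", 0.95), ("b", "malignant", 0.55), ("c", "benign", 0.70)]
accepted, flagged = triage(preds)
buffer = record_expert_feedback(flagged, {"b": "malignant", "c": "malignant"}, [])
print(accepted)  # [('a', 'benign')]
print(buffer)    # [('b', 'malignant', True), ('c', 'malignant', False)]
```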

Personalized treatment recommendation systems use self-reflection to evaluate outcomes across diverse patient populations. By analyzing which interventions worked for similar cases and reflecting on prediction accuracy, these systems continuously refine their recommendations, leading to increasingly personalized and effective healthcare strategies.

Autonomous Vehicles and Navigation Systems

Self-driving cars equipped with reflective AI capabilities analyze every journey, identifying moments of uncertainty or suboptimal decision-making. When the system encounters a challenging scenario—such as unusual weather conditions or unexpected pedestrian behavior—it reflects on its response and considers alternative approaches that might have been more effective.

These vehicles don’t just accumulate miles; they accumulate wisdom. Each autonomous system can learn from fleet-wide experiences, with self-reflection mechanisms identifying valuable lessons from millions of driving hours and incorporating them into improved decision-making frameworks.

Natural Language Processing and Conversational AI

Modern language models employ self-reflection to generate more accurate and contextually appropriate responses. These systems evaluate their own outputs for coherence, factual accuracy, and relevance before presenting them to users. When inconsistencies are detected through internal verification processes, the AI can revise its responses or indicate uncertainty rather than confidently presenting incorrect information.
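One simple internal check is self-consistency: ask the model the same question several times and treat disagreement among its own answers as a sign of uncertainty. In the sketch below, `sample` is a placeholder for a hypothetical call to a language model with sampling enabled, and the 0.6 agreement threshold is an arbitrary assumption. Agreement measures consistency, not truth; a model can be consistently wrong.

```python
from collections import Counter
from typing import Callable, Optional


def self_consistent_answer(
    sample: Callable[[], str], n: int = 5, min_agreement: float = 0.6
) -> tuple[Optional[str], float]:
    """Sample n answers; return the majority answer, or None if agreement is too low."""
    answers = [sample() for _ in range(n)]
    answer, votes = Counter(answers).most_common(1)[0]
    agreement = votes / n
    if agreement < min_agreement:
        return None, agreement  # signal uncertainty instead of guessing
    return answer, agreement


# Stand-ins for a model sampled with some randomness (e.g. temperature > 0).
consistent = iter(["Paris", "Paris", "Lyon", "Paris", "Paris"])
scattered = iter(["1912", "1915", "1912", "1920", "1918"])
print(self_consistent_answer(lambda: next(consistent)))  # ('Paris', 0.8)
print(self_consistent_answer(lambda: next(scattered)))   # (None, 0.4)
```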

This self-evaluative capability can make AI assistants, chatbots, and content generation tools noticeably more reliable. Systems that recognize when they’re operating outside their knowledge boundaries can modulate their confidence accordingly, leading to more trustworthy human-AI interactions.

The Architecture Behind Self-Reflective Systems

Building AI systems capable of meaningful self-reflection requires sophisticated architectural designs that go beyond standard neural networks. These architectures typically incorporate multiple specialized components that work together to enable introspection and self-improvement.

Multi-Agent Frameworks

One effective approach employs multiple AI agents with distinct roles. A primary agent executes tasks while a secondary agent—the critic—evaluates the quality of those executions. This separation creates an internal dialogue where different components challenge and refine each other’s outputs, similar to how humans benefit from peer review and constructive criticism.

Advanced implementations include a third mediator agent that synthesizes feedback from the critic and determines which adjustments the primary agent should implement. This three-way architecture can help keep the system out of local optima in which a simpler two-agent loop might stagnate.
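The loop below is a minimal sketch of the three roles, applied to a toy numeric task (finding x with x * x = 2) so that every step can be checked. The primary agent proposes a revision, the critic reports how far off it is and in which direction to move, and the mediator decides whether to accept the revision or request a smaller one. The role functions and the accept-or-shrink rule are assumptions for illustration; in a real system each role might be a separate model exchanging natural-language feedback.

```python
from typing import Callable


def critic(x: float) -> tuple[float, float]:
    """Score a candidate: size of the error, plus the direction that would reduce it."""
    error = abs(x * x - 2.0)
    direction = -1.0 if x * x > 2.0 else 1.0
    return error, direction


def primary(x: float, direction: float, step: float) -> float:
    """Propose a revision by moving in the direction the critic suggested."""
    return x + direction * step


def mediator(
    propose: Callable[[float, float, float], float],
    critique: Callable[[float], tuple[float, float]],
    x: float = 0.0,
    step: float = 1.0,
    rounds: int = 40,
) -> float:
    error, direction = critique(x)
    for _ in range(rounds):
        candidate = propose(x, direction, step)
        cand_error, cand_direction = critique(candidate)
        if cand_error < error:
            # accept: the critic confirms the revision is an improvement
            x, error, direction = candidate, cand_error, cand_direction
        else:
            # reject: keep the current answer and ask for a smaller revision next round
            step /= 2
    return x


print(mediator(primary, critic))  # close to 1.41421...
```

Because the mediator accepts only revisions the critic scores as improvements, the answer never gets worse from one round to the next; shrinking the step on rejection is what stops the primary agent from oscillating around the target.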

Attention Mechanisms and Self-Monitoring

Attention mechanisms, originally developed for language processing, have been adapted to support AI self-reflection. These systems can “attend” to their own internal states and decision-making processes, revealing which features or pathways contributed most to a specific output. Analyzing these attention patterns gives a partial view of the model’s reasoning and can help surface biases or limitations in its approach, although attention weights alone do not fully explain why a model produced a particular output.

Self-attention layers allow networks to consider relationships between different parts of their own processing, creating a form of internal coherence checking. This mechanism helps ensure that conclusions drawn from data remain logically consistent and that the system recognizes when different components might be producing conflicting signals.
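The attention weights discussed above are straightforward to compute. The sketch below implements scaled dot-product self-attention in plain Python, simplified by using the input vectors directly as queries, keys, and values (real transformer layers learn separate projection matrices for each). Row i of the returned weight matrix shows how strongly position i draws on every other position, which is the raw material for this kind of self-inspection.

```python
import math


def softmax(xs: list[float]) -> list[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors: list[list[float]]) -> tuple[list[list[float]], list[list[float]]]:
    """Scaled dot-product self-attention with identity Q/K/V projections (a simplification)."""
    d = len(vectors[0])
    outputs, weights = [], []
    for q in vectors:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in vectors]
        w = softmax(scores)
        weights.append(w)
        # each output is a weighted average of all value vectors
        outputs.append([sum(w[j] * v[i] for j, v in enumerate(vectors)) for i in range(d)])
    return outputs, weights


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
_, attn = self_attention(tokens)
for row in attn:
    print([round(a, 2) for a in row])
```

Positions 0 and 2 hold identical vectors, so each attends more to the other (and to itself) than to position 1.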

🔍 Measuring Success: Metrics for Self-Evaluation

Effective self-reflection requires robust metrics that AI systems can use to evaluate their own performance. These metrics must be carefully designed to capture nuanced aspects of success beyond simple accuracy scores.

| Metric Category | Purpose | Example Applications |
|---|---|---|
| Confidence Calibration | Measures how well predicted confidence matches actual accuracy | Medical diagnosis, financial forecasting |
| Consistency Scoring | Evaluates whether similar inputs produce appropriately similar outputs | Legal analysis, content moderation |
| Uncertainty Quantification | Assesses the system’s awareness of its own knowledge limitations | Scientific research, risk assessment |
| Efficiency Metrics | Tracks computational resources used relative to output quality | Real-time processing, mobile applications |

These metrics enable AI systems to develop a sophisticated understanding of their own strengths and weaknesses. A well-calibrated self-reflective system knows not just what it knows, but how well it knows it, and where its knowledge boundaries lie.
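Confidence calibration, the first row of the table, is commonly summarized with expected calibration error (ECE): predictions are grouped into confidence bins, and the gap between average confidence and actual accuracy in each bin is averaged, weighted by the bin's share of predictions. The function below is a minimal sketch of that standard definition; ten equal-width bins is a common convention, not a requirement.

```python
def expected_calibration_error(
    confidences: list[float], correct: list[bool], n_bins: int = 10
) -> float:
    """Weighted mean of |accuracy - average confidence| over equal-width confidence bins."""
    n = len(confidences)
    ece = 0.0
    for b in range(n_bins):
        lo, hi = b / n_bins, (b + 1) / n_bins
        # bins are (lo, hi]; the first bin also takes a confidence of exactly 0
        idx = [i for i, c in enumerate(confidences) if lo < c <= hi or (b == 0 and c == 0.0)]
        if not idx:
            continue
        avg_conf = sum(confidences[i] for i in idx) / len(idx)
        accuracy = sum(correct[i] for i in idx) / len(idx)
        ece += len(idx) / n * abs(avg_conf - accuracy)
    return ece


# A model that says "90% sure" but is right only half the time is badly calibrated.
print(expected_calibration_error([0.9] * 10, [True] * 5 + [False] * 5))  # ≈ 0.4
print(expected_calibration_error([0.9] * 10, [True] * 9 + [False]))      # ≈ 0.0
```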

Overcoming Challenges in AI Self-Reflection

Despite remarkable progress, implementing effective self-reflection in AI systems presents significant technical and conceptual challenges that researchers continue to address.

The Evaluation Paradox

A fundamental challenge emerges: how can a system accurately evaluate itself when its evaluative capabilities are subject to the same limitations as its primary functions? If an AI’s judgment is flawed, its self-assessment will inherit those flaws. This circular dependency requires careful architectural design where evaluation mechanisms are developed through different training processes and validation procedures than the primary system.

Researchers address this through ensemble approaches, in which multiple independent models assess each other’s outputs, and through human-in-the-loop systems that periodically validate the self-evaluation mechanisms themselves. This hierarchical structure of oversight reduces the risk that errors compound.
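A minimal sketch of the ensemble idea: run several independently trained models on the same input and treat their disagreement as a warning sign. Here the models are stand-in lambdas and `max_spread` is a hypothetical escalation threshold. Agreement is not proof of correctness, since models trained on similar data can share the same blind spots, which is why the human-in-the-loop check remains part of the design.

```python
from statistics import mean, pstdev
from typing import Callable, Sequence


def ensemble_assess(
    models: Sequence[Callable[[float], float]], x: float, max_spread: float = 0.1
) -> tuple[float, float, bool]:
    """Return (consensus prediction, spread across models, whether to escalate to a human)."""
    preds = [m(x) for m in models]
    spread = pstdev(preds)  # population standard deviation of the ensemble's predictions
    return mean(preds), spread, spread > max_spread


agreeing = [lambda x: 2 * x, lambda x: 2 * x + 0.01, lambda x: 2 * x - 0.01]
disagreeing = [lambda x: x, lambda x: 2 * x, lambda x: 3 * x]
print(ensemble_assess(agreeing, 1.0))     # small spread, no escalation
print(ensemble_assess(disagreeing, 1.0))  # large spread, escalate
```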

Computational Overhead and Efficiency

Self-reflection inherently requires additional computational resources. Every time a system evaluates its own output, it essentially performs the task twice—once to generate the result and again to assess it. For resource-constrained applications or real-time systems, this overhead can be prohibitive.

Optimization strategies include selective self-reflection, where the system only engages deep introspection for high-stakes decisions or when initial confidence is low. Lightweight monitoring systems continuously screen for potential issues, triggering comprehensive self-evaluation only when necessary.
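Selective self-reflection amounts to a cascade: a cheap first pass handles routine inputs, and the expensive review runs only when the first pass is unsure or the decision is marked high-stakes. In this sketch `fast_model` and `deep_review` are hypothetical callables standing in for a lightweight monitor and a full self-evaluation; the 0.8 cutoff is an assumed value.

```python
from typing import Callable


def answer_selectively(
    x: str,
    fast_model: Callable[[str], tuple[str, float]],
    deep_review: Callable[[str, str], str],
    min_confidence: float = 0.8,
    high_stakes: bool = False,
) -> tuple[str, bool]:
    """Return (answer, whether the expensive review ran)."""
    answer, confidence = fast_model(x)
    if high_stakes or confidence < min_confidence:
        return deep_review(x, answer), True  # pay the extra cost only when needed
    return answer, False


# Toy stand-ins: the fast model is confident only on short inputs.
def fast(s: str) -> tuple[str, float]:
    return s.upper(), (0.9 if len(s) < 5 else 0.5)


def deep(s: str, draft: str) -> str:
    return draft + " (reviewed)"


results = [answer_selectively(s, fast, deep) for s in ["abc", "hello world", "ok"]]
reviews = sum(ran for _, ran in results)
print(results, f"deep reviews: {reviews}/{len(results)}")
```

Only the input the fast pass is unsure about pays for the deep review, which is where the computational savings come from.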

Avoiding Degenerate Solutions

Without careful design, self-reflective systems can develop degenerate strategies that game their own evaluation metrics. For example, a system might learn to assign high confidence scores to all predictions simply because doing so temporarily improves certain performance metrics, even though actual accuracy doesn’t improve.

Preventing this requires robust reward structures, diverse evaluation metrics, and regular validation against real-world outcomes that the system cannot directly manipulate. The self-reflection loop must remain grounded in objective performance measures rather than purely internal assessments.
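The confidence-inflation failure mode is easy to demonstrate. If the only metric is average stated confidence, claiming certainty on every prediction looks like an improvement. A proper scoring rule such as the Brier score compares stated confidence with what actually happened, so the same trick is penalized. The outcome list below is made-up illustrative data, not results from any real system.

```python
def mean_confidence(confs: list[float]) -> float:
    """A gameable metric: rewards high stated confidence regardless of outcomes."""
    return sum(confs) / len(confs)


def brier_score(confs: list[float], outcomes: list[int]) -> float:
    """Mean squared gap between stated confidence and the actual outcome (lower is better)."""
    return sum((c - o) ** 2 for c, o in zip(confs, outcomes)) / len(confs)


outcomes = [1, 1, 1, 0, 1, 0, 1, 1, 0, 1]  # 1 = prediction turned out correct (7 of 10)
honest = [0.7] * 10    # calibrated: 70% confidence, matching the 70% hit rate
inflated = [1.0] * 10  # degenerate strategy: claim certainty every time

print(mean_confidence(honest), mean_confidence(inflated))              # inflated "wins"
print(brier_score(honest, outcomes), brier_score(inflated, outcomes))  # honest wins: ~0.21 vs ~0.3
```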

🚀 The Future Landscape of Self-Improving AI

The evolution of AI self-reflection points toward increasingly autonomous systems that require minimal human intervention while maintaining reliability and transparency. Several emerging trends suggest where this technology is heading.

Continual Learning Without Catastrophic Forgetting

Traditional neural networks face catastrophic forgetting—when learning new information causes them to lose previously acquired knowledge. Self-reflective systems address this by monitoring their own performance across different domains and adjusting learning rates accordingly. The AI recognizes when it’s beginning to forget important skills and takes corrective action, balancing new learning with knowledge preservation.
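A minimal sketch of that self-monitoring loop: keep a small held-out set for every task learned so far, re-evaluate after each round of training, and damp the learning rate whenever an earlier task's accuracy falls noticeably below its best. The class name, tolerance, and decay factor are hypothetical. Lowering the learning rate is only one possible reaction; established alternatives include replaying stored examples from old tasks and regularization methods such as elastic weight consolidation.

```python
class ForgettingMonitor:
    """Hypothetical helper: flags forgetting on earlier tasks and slows learning in response."""

    def __init__(
        self, lr: float = 0.01, tolerance: float = 0.02, decay: float = 0.5, min_lr: float = 1e-4
    ):
        self.lr = lr
        self.tolerance = tolerance  # how far below its best a task may drop before we react
        self.decay = decay
        self.min_lr = min_lr
        self.best: dict[str, float] = {}  # task name -> best held-out accuracy seen so far

    def update(self, accuracies: dict[str, float]) -> tuple[float, list[str]]:
        """Record current held-out accuracies; return (new learning rate, tasks being forgotten)."""
        forgotten = []
        for task, acc in accuracies.items():
            best = self.best.get(task, acc)
            if acc < best - self.tolerance:
                forgotten.append(task)
            self.best[task] = max(best, acc)
        if forgotten:
            self.lr = max(self.min_lr, self.lr * self.decay)
        return self.lr, forgotten


monitor = ForgettingMonitor()
print(monitor.update({"task_a": 0.90}))                  # (0.01, [])
print(monitor.update({"task_a": 0.89, "task_b": 0.70}))  # (0.01, []): small dip is tolerated
print(monitor.update({"task_a": 0.80, "task_b": 0.75}))  # (0.005, ['task_a'])
```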

This capability enables truly lifelong learning systems that accumulate expertise over years of operation, similar to human professionals who continuously refine their skills throughout their careers.

Collaborative Self-Reflection in Multi-AI Systems

Future implementations will likely feature networks of AI systems that reflect not just on their own performance but on each other’s capabilities. These collaborative frameworks allow specialized systems to develop complementary skills, with each agent understanding its role within a larger ecosystem and deferring to peers when appropriate.

This approach mirrors human teamwork, where individuals with self-awareness of their strengths and limitations work together effectively. AI teams that engage in collective reflection can solve problems beyond the capability of any individual system.

Explainable Self-Reflection for Human Trust

As AI systems become more autonomous, understanding their self-reflective processes becomes crucial for human oversight and trust. Emerging research focuses on creating interpretable self-evaluation mechanisms where the AI can articulate why it assessed its performance in specific ways and what adjustments it plans to implement.

This transparency allows human operators to validate the AI’s self-assessment, intervene when necessary, and build confidence in the system’s autonomous improvement capabilities. The goal is not AI that simply improves mysteriously, but AI that can explain its growth trajectory to human stakeholders.

💡 Ethical Considerations and Responsible Development

The power of self-reflective AI raises important ethical questions that the AI community must address proactively. Systems that can modify their own behavior based on self-assessment require robust safeguards to ensure they evolve in beneficial directions.

One critical concern involves value alignment—ensuring that as AI systems self-improve, they continue adhering to human values and ethical principles. Self-reflection mechanisms must include evaluation criteria that go beyond performance metrics to consider fairness, safety, and social impact. An AI that optimizes purely for task completion without reflecting on broader consequences could develop harmful strategies.

Transparency in self-modification represents another crucial ethical dimension. When AI systems autonomously adjust their parameters based on self-reflection, stakeholders need visibility into these changes. This is particularly important in high-stakes domains like healthcare, criminal justice, and financial services, where algorithmic decisions significantly impact human lives.

The question of accountability becomes more complex with self-reflective systems. If an AI modifies its own behavior and subsequently makes an error, who bears responsibility—the original developers, the operators who deployed it, or does the system itself carry some form of accountability? These questions require ongoing dialogue between technologists, ethicists, policymakers, and the broader public.

Bridging Human and Machine Reflection

Perhaps the most intriguing aspect of AI self-reflection is what it reveals about intelligence itself. By attempting to create machines that learn through introspection, researchers gain insights into human metacognition and self-improvement processes. This bidirectional learning—where studying human reflection informs AI development, and building self-reflective AI illuminates human cognition—represents a powerful synergy.

The ultimate vision extends beyond AI that merely imitates human self-reflection to systems that complement human thinking. While humans excel at creative introspection and values-based evaluation, AI can process vast amounts of performance data and identify subtle patterns in its own behavior that would be impossible for humans to detect. Together, human and machine reflection can create hybrid intelligence systems that exceed the capabilities of either alone.

As these technologies mature, self-reflective AI will become less of a specialized research topic and more of a standard component in intelligent systems. Just as modern software includes error checking and logging as basic features, future AI will incorporate self-evaluation and autonomous improvement as fundamental capabilities. This evolution promises to unlock new possibilities for adaptive, resilient, and genuinely intelligent machines that continuously learn and grow throughout their operational lifetime.

The journey toward truly self-aware artificial intelligence remains ongoing, but the progress in AI self-reflection demonstrates that machines can indeed learn to learn, evaluate their own performance, and chart their own courses for improvement. This represents not the end goal of artificial intelligence, but rather a crucial stepping stone toward systems that can partner with humans in solving the complex challenges facing our world.

Toni Santos is a machine-ethics researcher and algorithmic-consciousness writer exploring how AI alignment, data bias mitigation and ethical robotics shape the future of intelligent systems. Through his investigations into sentient machine theory, algorithmic governance and responsible design, Toni examines how machines might mirror, augment and challenge human values. Passionate about ethics, technology and human-machine collaboration, Toni focuses on how code, data and design converge to create new ecosystems of agency, trust and meaning. His work highlights the ethical architecture of intelligence, guiding readers toward the future of algorithms with purpose. Blending AI ethics, robotics engineering and philosophy of mind, Toni writes about the interface of machine and value, helping readers understand how systems behave, learn and reflect. His work is a tribute to:

- The responsibility inherent in machine intelligence and algorithmic design

- The evolution of robotics, AI and conscious systems under value-based alignment

- The vision of intelligent systems that serve humanity with integrity

Whether you are a technologist, ethicist or forward-thinker, Toni Santos invites you to explore the moral architecture of machines: one algorithm, one model, one insight at a time.